
A Practical Guide to Enterprise AI Implementation in CX

Every enterprise CX leader has heard the pitch. A new AI platform. A new LLM powering a GenAI solution. An agentic framework that promises to automate 80% of interactions before Q4.

And yet, most enterprise AI deployments stall. Pilots run for months. Use cases get quietly abandoned. The contact center remains exactly as it was — except now with a costly AI layer nobody fully trusts.

Usually, the challenge isn’t the technology – it’s how quickly and effectively you can implement it and start seeing results.

Over the past few years, Ozonetel has deployed AI-powered CX solutions across some of India’s most demanding enterprises — a DTH operator serving 12 million subscribers, a leading stock broking platform, an NBFC with 22,000 agents, and a state government delivering 580+ public services. What we’ve learned across these deployments is worth putting on record — because too many enterprises are making the same avoidable mistakes.

In this article, we will explore:

1. Why Enterprise AI Deployments Fail Before They Start
2. The Four Pillars of Successful Enterprise AI in CX
3. AI Guardrails: The Foundation of Trust at Scale
4. Implementation Prerequisites That Actually Move the Needle
5. Making Each AI Capability Work
6. Case Studies: What This Looks Like in Practice
7. Where Enterprises Stand in the AI Journey
8. The Ozonetel Approach: Outcome Driven & Consultative

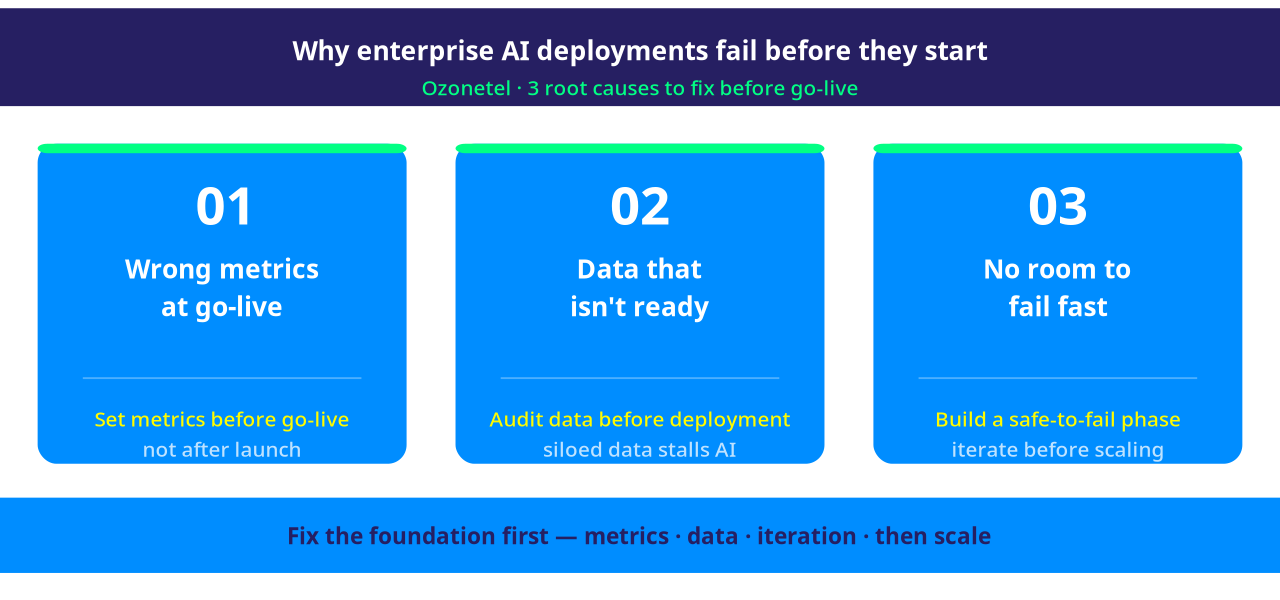

Why Enterprise AI Deployments Fail Before They Start

The most common failure isn’t a bad AI model—it’s misaligned expectations.

Enterprises often treat AI like a system upgrade: deploy, switch on, expect results. But AI in CX is not a plug-and-play tool. It’s a capability build.

And capability builds require a fundamentally different approach: clear objectives, process alignment, training, and continuous iteration to actually drive outcomes.

In this regard, three things consistently derail deployments before they deliver value.

1. No success metrics before go-live. If you can’t define what “working” looks like before launch, you won’t know whether it’s failing after. AI metrics can’t simply mirror human benchmarks: an AI that resolves 50% of conversations in month one, with the rest transferred to agents, isn’t underperforming. It’s progressing. Calibrate success to what AI can achieve at each stage, and define it in phases, not absolutes.

2. Data that isn’t ready. Your AI is only as good as the data it can read, and in most enterprises critical data sits locked in systems that don’t talk to each other. CRMs, backend databases, product catalogs, transaction records, and ticketing systems each hold a piece of the customer picture. For AI to act on that picture, every relevant system must expose its data via API, in real time, in a format the AI can consume. This isn’t just an integration task; it’s an interoperability mandate. Without it, even the most capable model works from an incomplete view, and incomplete views produce wrong answers.

3. No room to fail fast. Some use cases won’t work. The enterprises that succeed are the ones with the organizational tolerance to identify a failing use case early, pause it, and move on to the next one without treating it as a program failure. Piloting with volume-percentage routing (sending 5–10% of traffic to the AI before full deployment) provides this safety valve.
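Volume-percentage routing can be as simple as a deterministic hash split. The sketch below is a minimal illustration under stated assumptions, not Ozonetel’s implementation: it assumes every caller has a stable ID, and `route_to_ai` is a hypothetical helper. Because each caller hashes to a fixed bucket, the same caller always gets the same treatment, and widening the pilot from 5% to 10% only adds callers to the AI path without moving anyone already on it.

```python
import hashlib


def route_to_ai(caller_id: str, ai_percentage: float) -> bool:
    """Decide whether a call goes to the AI pilot (hypothetical helper).

    The caller ID is hashed into a stable bucket from 0.00 to 99.99, so the
    same caller always gets the same decision at a given percentage.
    """
    if not 0 <= ai_percentage <= 100:
        raise ValueError("ai_percentage must be between 0 and 100")
    digest = hashlib.sha256(caller_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000 / 100
    return bucket < ai_percentage


if __name__ == "__main__":
    callers = [f"caller-{i}" for i in range(10_000)]
    share = sum(route_to_ai(c, 10) for c in callers) / len(callers)
    print(f"{share:.1%} of callers routed to the AI pilot")  # expect a share close to 10%
```

Hashing a stable ID rather than drawing a random number per call also keeps repeat callers from bouncing between the bot and a human during the pilot.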

In short, data must be exposed through APIs so the AI can read it directly from source systems.

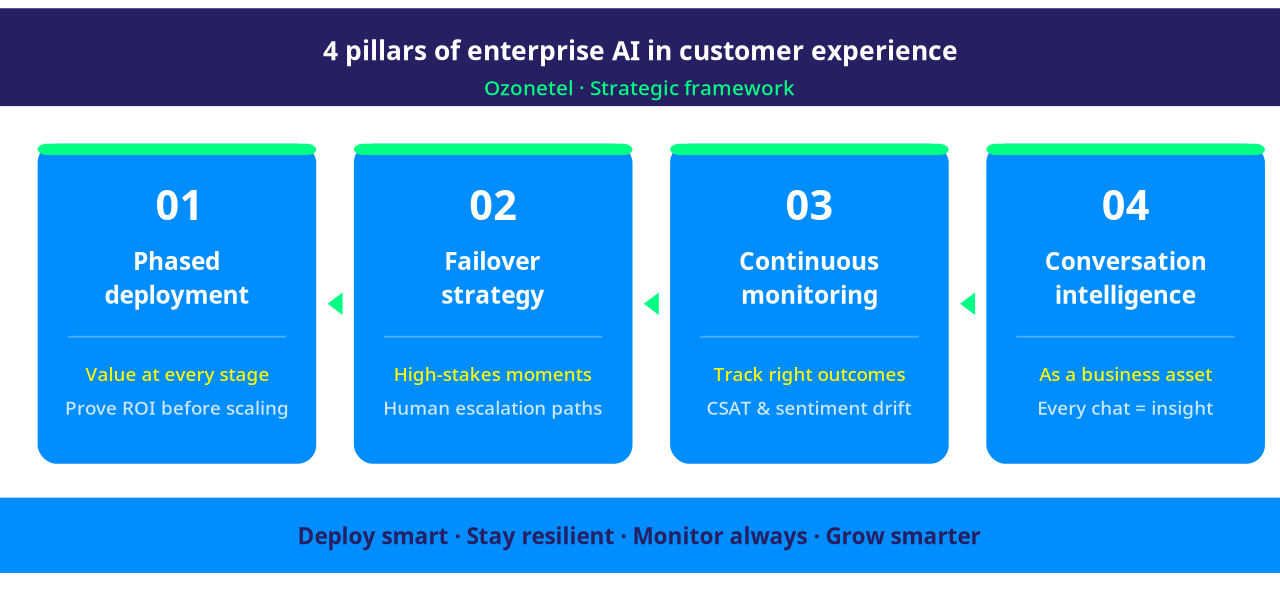

The Four Pillars of Successful Enterprise AI in CX

Based on Ozonetel’s deployment experience, successful enterprise AI programs consistently get four things right.

1. Phased Deployment With Demonstrated Value at Every Stage

No enterprise should deploy AI across all its CX operations simultaneously. The most successful programs we’ve seen run in three phases: a contained pilot on one high-volume use case, a measured expansion across related workflows, and — only once both phases have demonstrated ROI — a broader transformation program.

Cloud migration was fundamentally a “where” problem: the same workloads, running on different infrastructure. AI deployment is a “what” and “how” problem. You’re not moving existing processes to a new environment; you’re redesigning how decisions get made, how customers get served, and how your teams work. That redesign is why value has to be proven one phase at a time.
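One way to make “demonstrated value at every stage” enforceable is to write each phase’s exit criterion down before the phase starts and advance only when it is met. A minimal sketch; the phase names follow the three phases described above, while the metrics and targets are hypothetical placeholders (the 50% pilot target simply echoes the month-one example earlier in this guide).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    metric: str    # metric that gates exit from this phase
    target: float  # minimum value required to move on


# Hypothetical phase plan: replace metrics and targets with your own.
PHASES = [
    Phase("pilot", "ai_resolution_rate", 0.50),
    Phase("expansion", "roi_multiple", 1.0),
    Phase("transformation", "roi_multiple", 1.0),
]


def next_phase(current: int, measured: dict[str, float]) -> int:
    """Return the index of the phase to run next.

    Advance only if the current phase's gating metric meets its target;
    otherwise stay in the current phase and keep iterating.
    """
    phase = PHASES[current]
    value = measured.get(phase.metric)
    if value is not None and value >= phase.target and current + 1 < len(PHASES):
        return current + 1
    return current


print(next_phase(0, {"ai_resolution_rate": 0.42}))  # 0: stay in pilot
print(next_phase(0, {"ai_resolution_rate": 0.55}))  # 1: move to expansion
```

A missing measurement keeps the program where it is, which mirrors the rule that expansion happens on evidence, not on schedule.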

2. Design with a Failover Strategy for High-Stakes Interactions

High-stakes customer interactions demand reassurance, not rigid automation. Designing with a failover strategy means knowing when to step back and let a human take over. When conversations become complex or sensitive, a smooth handoff—without losing context—helps ensure effective communication and builds trust.

The goal isn’t to contain every conversation within AI. It’s to ensure that when a human needs to step in, they step in informed, and the customer simply feels heard, helped, and never passed around.
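In code, a failover policy is often a short list of explicit triggers checked on every turn. The sketch below is hypothetical: it assumes the bot exposes a per-turn confidence score, a detected intent, and a count of consecutive failed turns, and every threshold and intent name is an illustrative assumption to be tuned against real escalation data.

```python
from dataclasses import dataclass

# Illustrative assumptions: tune these against your own escalation data.
CONFIDENCE_FLOOR = 0.6
MAX_FAILED_ATTEMPTS = 2
SENSITIVE_INTENTS = {"complaint", "fraud_report", "bereavement"}


@dataclass
class TurnState:
    intent: str
    confidence: float      # bot's confidence in its understanding, 0.0 to 1.0
    failed_attempts: int   # consecutive turns the bot could not resolve
    customer_asked_for_agent: bool = False


def should_escalate(state: TurnState) -> tuple[bool, str]:
    """Return (escalate?, reason) for the current turn, checking triggers in priority order."""
    if state.customer_asked_for_agent:
        return True, "customer requested a human"
    if state.intent in SENSITIVE_INTENTS:
        return True, f"sensitive intent: {state.intent}"
    if state.confidence < CONFIDENCE_FLOOR:
        return True, "low confidence"
    if state.failed_attempts >= MAX_FAILED_ATTEMPTS:
        return True, "repeated failures"
    return False, "bot continues"


print(should_escalate(TurnState("billing_query", 0.9, 0)))  # (False, 'bot continues')
print(should_escalate(TurnState("complaint", 0.95, 0)))     # (True, 'sensitive intent: complaint')
```

Returning a reason alongside the decision lets the reason travel with the handoff, so the agent knows why the bot stepped back.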

3. Continuous AI Monitoring for the Right Outcomes

Deploying AI is not a set-and-forget operation. An AI agent that performs well in week one may degrade as customer language patterns shift, product offerings change, or edge cases accumulate.

Enterprise-grade deployments need automated quality assurance: systems that continuously score AI conversations against human audit standards, flag fatal errors in real time, and give operations teams visibility into what is and isn’t resolving. At Ozonetel, we’ve seen QA scoring shift from sentiment-and-topic dashboards to a resolution focus: did the customer’s problem actually get solved?

Mature programs run two parallel tracks: AI monitoring AI — evaluating every bot conversation against resolution criteria, hallucination thresholds, and compliance parameters — and AI monitoring humans, scoring agent interactions against quality benchmarks without manual sampling. Different parameters, same outcome focus. That’s what separates programs that scale from pilots that stall.

4. Conversational Intelligence as a Business Asset

The conversation data from CX operations is one of the most underused assets in any enterprise. It contains buying patterns, churn signals, product feedback, competitive intelligence, and missed opportunities, all in raw form, waiting to be extracted.

Modern conversational AI insights go beyond dashboards. The most advanced deployments now let business users query conversation data directly (“What are the top reasons customers aren’t repurchasing?” or “What objections are my agents encountering most?”) and get answers in seconds from analyzed call and chat logs. This turns every conversation into a strategic input for product, marketing, and growth.

AI Guardrails: The Foundation of Trust at Scale

No enterprise can afford AI operating without control.

- Fatal Error Criteria: Automatically detect mission-critical failures and generate real-time alerts when they occur.
- Live Monitoring Dashboards: Instantly highlight anomaly spikes, escalation triggers, and compliance breaches.
- Human Escalation on Uncertainty: Never leave a customer stranded; increase agent intervention for edge cases.
- Transparent User Disclosure: Always signal when the customer is interacting with a bot, and make the applicable compliance standards clear.
- Quarterly Review: Reassess model performance and regulatory adherence every quarter.
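The anomaly spikes a live dashboard highlights can start from a simple statistical rule. A minimal sketch, assuming you already aggregate a per-interval metric such as escalation rate or fatal-error count: an interval is flagged when it sits more than three standard deviations above a trailing window. The class name, window size, and threshold are assumptions, and production monitoring often uses more robust detectors.

```python
from collections import deque
from statistics import mean, pstdev


class SpikeDetector:
    """Flag values far above a trailing window (simple z-score rule)."""

    def __init__(self, window: int = 12, z_threshold: float = 3.0):
        self.history = deque(maxlen=window)  # most recent interval values
        self.z_threshold = z_threshold

    def observe(self, value: float) -> bool:
        """Record a new interval's value; return True if it is a spike."""
        is_spike = False
        if len(self.history) >= 3:  # need a few points before judging
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                is_spike = (value - mu) / sigma > self.z_threshold
            else:
                is_spike = value > mu  # flat baseline: any rise counts
        self.history.append(value)
        return is_spike


detector = SpikeDetector()
escalation_rates = [0.10, 0.11, 0.09, 0.10, 0.12, 0.10, 0.35]
print([detector.observe(r) for r in escalation_rates])  # only the final interval is flagged
```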

Implementation Prerequisites That Actually Move the Needle

Getting enterprise AI right isn’t about jumping straight to deployment — it’s about laying the right foundation in the right sequence.

Step 1 — Get your data ready. Ozonetel’s AI can extract information & derive insights from raw transcripts, unstructured logs, and messy CRM records — but it can only work with data it can reach. Voice transcripts, knowledge bases, legacy logs, CRM records — they need to live in one accessible place. Fragmented, siloed data doesn’t just slow deployment — it stalls it indefinitely. This is where most enterprises lose months without realizing it.

Step 2 — Define what success actually looks like. Once the data foundation is in place, every planned use case needs a measurable business outcome tied to it: CSAT improvement, resolution rate, AHT reduction, or revenue uplift. If success can’t be defined before launch, it can’t be measured after. Ozonetel deployments that clear this step first have delivered up to 25% faster resolution and a 15% CSAT improvement within the first phase.

Step 3 — Choose the right use cases to start with. Not everything should be automated at once. Prioritize where you have the right data set, the outcome metric is clearest, and the cost of a failed experiment is lowest. Start there, learn fast, and expand only what works. The goal at this stage isn’t scale — it’s proof.

Step 4 — Integrate without disrupting what’s working. AI must fit into your existing ecosystem — cloud, on-prem, or hybrid — without breaking live operations. Map your current AI maturity, identify integration constraints early, and adopt a phase-wise migration strategy that de-risks each step before committing to the next.
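Step 3’s criteria (the right data, a clear outcome metric, a low cost of failure) can be turned into a simple ranking. A sketch under stated assumptions: the team scores each candidate from 1 to 5 on each criterion, the three criteria are weighted equally, and the example use cases are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    data_readiness: int   # 1 (data scattered) to 5 (clean, API-accessible)
    metric_clarity: int   # 1 (fuzzy outcome) to 5 (one clear KPI)
    failure_cost: int     # 1 (cheap to get wrong) to 5 (costly or regulated)

    def priority(self) -> int:
        # Readiness and clarity raise priority; a high failure cost lowers it.
        return self.data_readiness + self.metric_clarity + (6 - self.failure_cost)


# Hypothetical candidates scored by the team.
candidates = [
    UseCase("order status lookup", data_readiness=5, metric_clarity=5, failure_cost=1),
    UseCase("loan restructuring advice", data_readiness=2, metric_clarity=3, failure_cost=5),
    UseCase("payment reminder calls", data_readiness=4, metric_clarity=4, failure_cost=2),
]

for uc in sorted(candidates, key=UseCase.priority, reverse=True):
    print(f"{uc.priority():>2}  {uc.name}")
```

The top of the list is where to start proving value; low scorers wait until the data or the metric improves.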

Making Each AI Capability Work

Most AI programs don’t fail at the strategy level — they fail at the capability level. A voicebot that can’t handle context. An agent assist tool agents ignore. Conversational AI Insights pointed at the wrong metrics. Getting each capability right requires a different set of decisions. Here’s what that looks like in practice.

Agentic Voicebot and Chatbot

True agentic AI — the kind that executes multi-step workflows, makes decisions mid-conversation, and recovers from unexpected inputs — requires a different build philosophy than a scripted decision tree dressed up with NLU.

Use domain-specific agents, not one general agent. One agent handling billing, technical support, upgrades, and complaints will be mediocre at all of them. The better architecture is multiple specialized agents, each trained on a distinct workflow, orchestrated by a routing layer that hands off based on detected intent.

In Ozonetel’s DTH deployment, separate agents handle channel activation, error resolution, and VAS — each optimized for its domain, with clean handoffs between them.
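The routing layer described above can be sketched as a registry that maps a detected intent to a specialized agent, with anything unmatched going to a human. This is a simplified illustration, not the DTH deployment’s code: it assumes an upstream classifier has already produced the intent label, and the agent functions and intent keys are hypothetical stubs named after the three domains mentioned.

```python
from typing import Callable

Agent = Callable[[str], str]


# Hypothetical stubs: each would be a full workflow agent in a real system.
def channel_activation_agent(message: str) -> str:
    return "Channel activation flow started."


def error_resolution_agent(message: str) -> str:
    return "Let's troubleshoot the error code on your screen."


def vas_agent(message: str) -> str:
    return "Here are the value-added services on your plan."


AGENTS: dict[str, Agent] = {
    "channel_activation": channel_activation_agent,
    "error_resolution": error_resolution_agent,
    "vas": vas_agent,
}


def route(intent: str, message: str) -> str:
    """Hand the message to the agent for this intent, or escalate if none fits."""
    agent = AGENTS.get(intent)
    if agent is None:
        return "ESCALATE: no specialized agent for this intent"
    return agent(message)


print(route("vas", "What extras do I get?"))
print(route("plan_upgrade", "I want a bigger pack"))  # no agent registered, so escalate
```

Adding a domain means registering one more agent; existing agents are untouched, which is the practical benefit of specialization.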

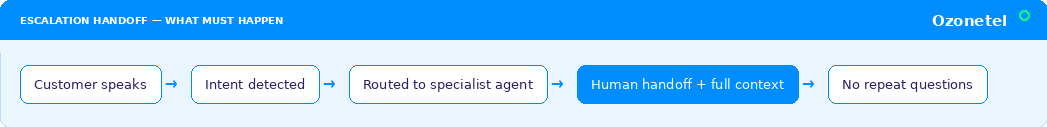

Design escalation as a first-class feature. When a bot transfers to a human, full conversation context must travel with the handoff — so the agent never asks the customer to repeat themselves. This is the most consequential moment in the customer’s experience of your AI program.
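In practice, “full conversation context” means a structured payload that travels with the transfer and renders as a short brief on the agent desktop. A minimal sketch; the class and field names are assumptions, not a documented Ozonetel schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    """Hypothetical payload sent with a bot-to-human transfer."""
    conversation_id: str
    customer_id: str
    detected_intent: str
    escalation_reason: str
    # Details the bot already gathered, so the agent never re-asks them.
    collected_fields: dict[str, str] = field(default_factory=dict)
    # Full transcript as a list of {"speaker": ..., "text": ...} turns.
    transcript: list[dict[str, str]] = field(default_factory=list)

    def summary(self) -> str:
        """One-line brief the agent can read before greeting the customer."""
        known = ", ".join(f"{k}={v}" for k, v in self.collected_fields.items()) or "none"
        return (f"Intent: {self.detected_intent}. Reason for transfer: "
                f"{self.escalation_reason}. Already collected: {known}.")

    def to_json(self) -> str:
        """Serialize the whole payload for the agent desktop or CRM."""
        return json.dumps(asdict(self))


ctx = HandoffContext(
    "c-1", "cust-42", "billing_dispute", "customer requested a human",
    {"account_verified": "yes"},
    [{"speaker": "customer", "text": "I was charged twice"}],
)
print(ctx.summary())
```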

For chatbots, multi-intent recognition matters equally — a customer asking about their order and your return policy in the same message should get both answers in a single turn, not two separate flows.
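Multi-intent handling means detecting every intent in a message and composing one reply. The sketch below uses naive keyword matching only to show that control flow; a real deployment would use an NLU model or LLM for detection, and the keywords and canned answers are hypothetical.

```python
# Hypothetical keyword lists; a production system would use a trained classifier.
INTENT_KEYWORDS = {
    "order_status": ("order", "delivery", "shipped"),
    "return_policy": ("return", "refund", "exchange"),
}

# Hypothetical canned answers; real replies would come from the relevant agents or lookups.
ANSWERS = {
    "order_status": "Your order is out for delivery.",
    "return_policy": "You can return items within the return window.",
}


def detect_intents(message: str) -> list[str]:
    """Return every intent whose keywords appear in the message."""
    text = message.lower()
    return [intent for intent, words in INTENT_KEYWORDS.items()
            if any(w in text for w in words)]


def reply(message: str) -> str:
    """Answer all detected intents in a single turn."""
    intents = detect_intents(message)
    if not intents:
        return "Could you tell me a bit more about what you need?"
    return " ".join(ANSWERS[i] for i in intents)


print(reply("Where is my order, and what is your return policy?"))
```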

Agent Assist

Agent assist has one of the highest ROI profiles in contact center AI, yet it is one of the most consistently under-implemented.

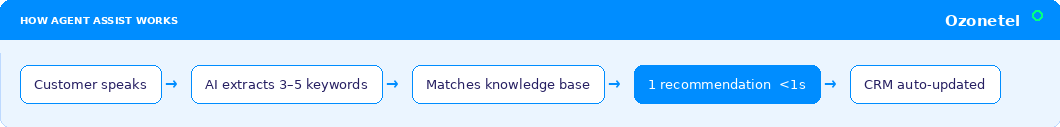

Speed is non-negotiable. A suggestion that surfaces 8 seconds after a customer finishes speaking is useless in a live interaction. The AI must process the conversational context and surface a recommendation in less than 1 second. This requires low-latency ASR, fast LLM inference, and a knowledge base optimized for retrieval, not a general-purpose document store.
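One way to enforce a sub-second budget is to treat late suggestions as worthless and drop them rather than show them. A minimal `asyncio` sketch; `fetch_suggestion` is a hypothetical stand-in for the ASR, retrieval, and LLM pipeline (it just sleeps for a given delay), and the 1-second budget comes from the requirement above.

```python
import asyncio
from typing import Optional

LATENCY_BUDGET_S = 1.0  # suggestions later than this are dropped


async def fetch_suggestion(context: str, delay_s: float) -> str:
    """Hypothetical stand-in for ASR + retrieval + LLM inference."""
    await asyncio.sleep(delay_s)
    return f"Suggested answer for: {context}"


async def suggest_within_budget(context: str, delay_s: float) -> Optional[str]:
    """Return a suggestion only if it arrives within the latency budget."""
    try:
        return await asyncio.wait_for(fetch_suggestion(context, delay_s), LATENCY_BUDGET_S)
    except asyncio.TimeoutError:
        return None  # too late to help the agent mid-call; show nothing


async def main() -> None:
    print(await suggest_within_budget("refund status", delay_s=0.2))  # arrives in time
    print(await suggest_within_budget("plan upgrade", delay_s=1.5))   # None: dropped


if __name__ == "__main__":
    asyncio.run(main())
```

`asyncio.wait_for` cancels the slow task on timeout, so a late inference call does not keep consuming resources after its answer has stopped being useful.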

Critical keywords, not just transcripts. Showing the agent a real-time transcript is not agent assist; it’s a distraction. The AI should extract 3–5 critical keywords from the conversation, map them to the most relevant knowledge base entry, and surface a concise prompt: one clear recommendation, not five options competing for the agent’s attention mid-call.
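The keyword-to-knowledge-base step can be illustrated with plain term counting. This is deliberately simplified: real systems would work from streaming ASR output and typically use embeddings or a search index, and the stopword list, knowledge-base entries, and overlap scoring here are hypothetical. It does keep the two properties the text asks for: a handful of keywords, and exactly one recommendation.

```python
import re
from collections import Counter
from typing import Optional

STOPWORDS = {"i", "my", "the", "a", "an", "is", "it", "to", "and", "was", "for",
             "of", "on", "in", "me", "you", "can", "why", "what", "this", "that"}

# Hypothetical knowledge base: entry title -> tags
KNOWLEDGE_BASE = {
    "Duplicate charge refund process": {"charged", "twice", "refund", "duplicate"},
    "Changing your billing date": {"billing", "date", "change"},
    "Resetting the set-top box": {"box", "reset", "signal", "error"},
}


def critical_keywords(utterance: str, k: int = 5) -> list[str]:
    """Return up to k most frequent non-stopword terms from the utterance."""
    words = re.findall(r"[a-z]+", utterance.lower())
    counts = Counter(w for w in words if w not in STOPWORDS and len(w) > 2)
    return [w for w, _ in counts.most_common(k)]


def best_entry(keywords: list[str]) -> Optional[str]:
    """Pick the single KB entry sharing the most keywords, or None if nothing matches."""
    scored = [(len(set(keywords) & tags), title) for title, tags in KNOWLEDGE_BASE.items()]
    score, title = max(scored)  # ties fall back to comparing titles
    return title if score > 0 else None


keywords = critical_keywords("I was charged twice for my bill, I need a refund for the twice charge")
print(keywords)
print(best_entry(keywords))  # Duplicate charge refund process
```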

Paired with automated post-call summaries that update CRM directly, this removes the after-call work that typically consumes 20–30% of handle time.

AI-Powered QA and Voice of Customer Intelligence

Traditional QA audits 2–3% of calls, manually, with a lag that makes the insights close to historical by the time they reach operations. AI-powered QA audits every conversation — but only delivers value if it is calibrated to measure the right things.

Shift from behavior auditing to validating resolution.

Did the agent follow the script? Did they use the correct greeting? These are process metrics. Did the customer’s problem actually get resolved? That is the metric that drives retention and NPS. AI QA should be calibrated to focus on resolution, with scoring aligned to human audit benchmarks so there is no divergence between what the system flags and what a QA manager would flag on the same call.
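Whether the AI “flags what a QA manager would flag” can be measured. One common approach (a suggestion here, not necessarily Ozonetel’s method) is to have humans audit a sample the AI also scored, then compare labels using raw agreement and Cohen’s kappa, which discounts the agreement expected by chance. The labels below are hypothetical.

```python
from collections import Counter


def agreement_and_kappa(ai: list[str], human: list[str]) -> tuple[float, float]:
    """Raw agreement and Cohen's kappa between AI and human QA labels."""
    if len(ai) != len(human) or not ai:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(ai)
    observed = sum(a == h for a, h in zip(ai, human)) / n
    ai_counts, human_counts = Counter(ai), Counter(human)
    # Agreement expected if both raters labeled independently at their own base rates.
    expected = sum(ai_counts[label] * human_counts[label]
                   for label in set(ai) | set(human)) / (n * n)
    if expected == 1:
        return observed, 1.0  # both raters used one identical label throughout
    kappa = (observed - expected) / (1 - expected)
    return observed, kappa


ai_labels = ["resolved", "resolved", "unresolved", "resolved", "unresolved", "resolved"]
human_labels = ["resolved", "unresolved", "unresolved", "resolved", "unresolved", "resolved"]
obs, kappa = agreement_and_kappa(ai_labels, human_labels)
print(f"agreement={obs:.2f}, kappa={kappa:.2f}")  # agreement=0.83, kappa=0.67
```

Raw agreement alone can look high when one label dominates; kappa makes that visible, which is why it is a useful companion metric when calibrating AI QA against human audits.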

At Ozonetel, this shift is also reflected in how fatal errors are handled: AI monitoring runs in parallel with every voicebot conversation, checking continuously against predefined criteria. When a fatal error is detected — a compliance violation, an incorrect customer commitment, a prohibited statement — it flags for immediate human review, not end-of-day batch reporting. The feedback loop must close fast enough to prevent the same error pattern from running through the next thousand calls.
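A rule-based first pass for fatal-error checks can run on each bot turn as it is produced. The sketch below is illustrative only: the rule names and regular expressions are hypothetical, real criteria would come from your compliance team, and rules like these are usually paired with a model-based classifier. What it shows is the timing: a hit triggers the alert callback immediately rather than waiting for a batch report.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical fatal-error rules: name -> pattern that must never appear in a bot turn.
FATAL_RULES = {
    "guaranteed_returns": re.compile(r"\bguaranteed?\s+(returns?|profits?)\b", re.IGNORECASE),
    "unapproved_fee_waiver": re.compile(r"\b(i|we)\s+(will|can|have)\s+waived?\b", re.IGNORECASE),
}


@dataclass
class FatalError:
    conversation_id: str
    rule: str
    text: str


def check_turn(conversation_id: str, bot_text: str,
               alert: Callable[[FatalError], None]) -> list[FatalError]:
    """Check one bot turn against every rule and alert on each hit right away."""
    hits = []
    for rule, pattern in FATAL_RULES.items():
        if pattern.search(bot_text):
            error = FatalError(conversation_id, rule, bot_text)
            alert(error)  # e.g. notify a supervisor now, not in an end-of-day report
            hits.append(error)
    return hits


alerts: list[FatalError] = []
check_turn("c1", "This plan offers guaranteed returns of 12%.", alerts.append)
check_turn("c2", "Your payment is due on the 5th.", alerts.append)
print([a.rule for a in alerts])  # ['guaranteed_returns']
```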

VoC intelligence that business teams actually need.

Most VoC dashboards require a data analyst to interpret. The more useful model is a queryable AI persona — where a marketing manager asks “what are the top three reasons customers aren’t repurchasing?” and gets a synthesized answer from 10,000 analyzed conversations in seconds. Buying patterns, churn risk signals, product objection mapping, agent performance gaps — all accessible via natural language, without a custom report request. SWOT analysis on customer sentiment. Competitor mentions surfaced from unstructured call data.

At Ozonetel, conversational intelligence has evolved from static dashboards to dynamic querying, with insights surfaced to specific business functions: what the product team needs to see is different from what sales or ops needs to see. Every conversation becomes institutional intelligence – available to every function, on demand.

Case Studies: What This Looks Like in Practice

What AI in customer experience actually looks like: practical, measurable, and already driving results at scale.

Leading DTH Operator: 55% of Queries Automated Across 10+ Languages

A DTH operator serving 12+ million subscribers needed to handle customer service in Hindi and 10+ regional languages across channel activations, error resolution, promotional queries, and value-added services — at a scale and accuracy that a traditional IVR couldn’t approach.

Ozonetel built a custom AI orchestration platform — not an off-the-shelf framework — with hot-swappable TTS, ASR, and LLM providers, domain-specific agents per workflow, and a deterministic data layer for zero-hallucination lookups via REST API.
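The idea behind a deterministic data layer is that facts such as balances and due dates come from a system of record and are inserted verbatim, so the language model never generates them. A minimal sketch under assumptions: `fetch_account` is a hypothetical callable that would wrap the REST lookup in production, the field names are invented, and when the lookup fails the bot says so and offers an agent instead of guessing.

```python
from typing import Callable, Optional

# In production, fetch_account would wrap a REST call; here it is any callable
# that returns a dict of account fields, or None if the record is missing.
AccountFetcher = Callable[[str], Optional[dict]]


def answer_balance_query(subscriber_id: str, fetch_account: AccountFetcher) -> str:
    """Compose the reply from looked-up facts only; never let the model fill them in."""
    try:
        account = fetch_account(subscriber_id)
    except Exception:
        account = None  # treat network or API errors like a missing record
    if not account or "balance" not in account or "due_date" not in account:
        return "I couldn't fetch your account details right now. Let me connect you to an agent."
    # Values are inserted verbatim from the system of record.
    return f"Your current balance is {account['balance']}, due on {account['due_date']}."


fake_records = {"S1": {"balance": "349.00", "due_date": "2024-07-05"}}
print(answer_balance_query("S1", fake_records.get))
print(answer_balance_query("S2", fake_records.get))  # unknown subscriber: offer an agent
```

A model can still phrase the surrounding conversation, but the numbers in the answer can only be what the lookup returned.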

Results: 55% of customer queries automated (20% higher than self-service IVR), an 88% QA score aligned with human audit benchmarks, and real-time call-to-insight analytics, across 6 service touchpoints.

Large NBFC: 15% Efficiency Gain Across 22,000 Agents

One of India’s largest NBFCs, running 22,000+ agents across sales, service, and collections, needed AI that could operate across genuinely complex workflows — not just FAQ deflection.

Ozonetel deployed self-service IVR for insurance updates, overdue payments, loan inquiries, and email ID changes, integrated with SugarCRM’s campaign, lead, and case management modules. The agentic AI layer added a revenue intelligence function: analyzing conversations to identify lost opportunities (worth crores flagged from a single process), detect buying patterns, surface churn risk indicators, and score agent performance. Voice AI agents now handle cross-sell outreach to existing customers, a revenue function that previously required human agents working from manually sourced lists.

Results: 15% improvement in agent efficiency, 90% first call resolution.

Telangana State Government: Agentic AI for 580+ Public Services

A state government delivering MeeSeva services to millions of citizens — certificates, payments, registrations — was constrained by physical center dependency and fragmented service delivery. Rule-based chatbots had already hit a ceiling: they couldn’t execute multi-step workflows or adapt to varied citizen intents.

Ozonetel deployed a WhatsApp-based agentic AI system that understands intent in English and Telugu, validates eligibility and documents in real time, guides citizens through multi-step service journeys end-to-end, and triggers payments and appointment bookings — escalating to a human agent only when genuinely required.

Results: 3 lakh+ sessions handled per month through a single WhatsApp number, 24/7 access to 580+ services, and significant reduction in physical center footfall.

Sunteck Realty: Smarter Sales Conversations Through AI-Led Insights

Sunteck Realty leveraged an AI-first CX platform that integrated seamlessly with their CRM to scale their sales operations. With AI-powered VoC, they can now analyze every interaction — surfacing patterns in what prospects are asking, where agents are losing momentum, and what’s actually driving conversions. These conversational insights feed directly into sales training and outreach strategy.

Results: Higher conversation quality and improved TAT for lead outreach, leading to accelerated business growth.

Where Enterprises Stand in the AI Journey

From Ozonetel’s vantage point, enterprise CX leaders today sit in one of three stages.

Stage 1 — Cloud complete, AI running. These enterprises have finished cloud migration and are in active AI deployment — running agentic use cases, measuring outcomes, and scaling what works.

Stage 2 — Cloud complete, AI beginning. CX operations are in cloud; AI pilots are just starting. The priority is use-case selection, data infrastructure, and success metric definition before the first voicebot goes live.

Stage 3 — Still in transition. A hybrid of on-premise and cloud CX makes centralized AI difficult to deploy effectively. The path forward is consolidation first — unified CX in cloud — before AI can deliver at scale.

The destination is the same for all three: a unified, AI-augmented CX operation that reduces cost, improves resolution, and generates intelligence that benefits functions well beyond the contact center.

The Ozonetel Approach: Outcome Driven & Consultative

What separates enterprise AI deployments that succeed from those that stall is rarely the AI model. It is the implementation partner’s ability to consult honestly: to say when a use case won’t work based on prior experience, to insist on data readiness and defined guardrails before go-live, and to build the monitoring infrastructure that keeps quality from quietly degrading six months post-launch.

Ozonetel’s enterprise AI deployments are built on one principle: show value in a phase, build on it, and move forward with evidence — not promises.

If your organization is evaluating enterprise AI for CX, or a previous deployment didn’t deliver what you expected, talk to our team. The conversation starts with your use case, your data, and what “successful” actually means for your operation.

Ozonetel works with enterprises at every stage.
